XDesign is an interactive platform that guides learners, step by step, through creating an interactive UI prototype that presents explanations. Each step gives students a specific task: (1) implement the LIME explainer from skeleton code, (2) generate and observe explanations for images that they upload, (3) categorize the explanations based on whether they are comprehensive or not, (4) discuss the limitations of the LIME explainer with concrete evidence found in the previous steps, (5) come up with a few representative questions that supplement the limitations, and (6) design an interactive UI prototype that answers the questions.

The first step of XDesign is for learners to implement the LIME explainer, a model-agnostic algorithm that generates explanations by fitting an interpretable model, trained on data in an interpretable representation, that locally approximates the original model. Because implementing the whole algorithm from scratch could be challenging for learners, XDesign provides detailed instructions on how the LIME explainer works, along with skeleton code, so that learners can complete the implementation more easily. The skeleton code can be found here. Using JupyterHub, XDesign provides each learner with a Jupyter Notebook environment that has the necessary libraries installed, so that learners can focus on the implementation without having to set up a development environment.
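To make the idea of a local interpretable approximation concrete, here is a minimal sketch of a LIME-style image explainer using only NumPy. It is not the XDesign skeleton code: the function names (`grid_segments`, `lime_image_sketch`), the grid-based stand-in for superpixels, the black-out perturbation, the simplified distance kernel, and the toy classifier are all assumptions made for illustration.

```python
import numpy as np


def grid_segments(height, width, cell):
    """Toy stand-in for a superpixel algorithm: split the image into square cells."""
    rows = np.arange(height) // cell
    cols = np.arange(width) // cell
    n_cols = (width + cell - 1) // cell
    return rows[:, None] * n_cols + cols[None, :]


def lime_image_sketch(image, segments, predict_fn, num_samples=500,
                      kernel_width=0.25, ridge=1.0, seed=0):
    """Return one importance score per segment for the class scored by predict_fn.

    image:      float array of shape (H, W, C)
    segments:   int array of shape (H, W) with labels 0..K-1
    predict_fn: maps a batch (N, H, W, C) to N scores for the class of interest
    """
    rng = np.random.default_rng(seed)
    n_segments = int(segments.max()) + 1

    # Interpretable representation: one binary feature per segment (1 = kept).
    z = rng.integers(0, 2, size=(num_samples, n_segments))
    z[0, :] = 1  # the first sample is the unperturbed image

    # Map each binary vector back to an image by blacking out hidden segments.
    perturbed = np.stack([image * row[segments][..., None] for row in z])
    y = np.asarray(predict_fn(perturbed), dtype=float)

    # Locality weights: samples that hide fewer segments count more.
    # (Simplified distance; an assumption, not the kernel of any specific library.)
    distance = 1.0 - z.mean(axis=1)
    weights = np.exp(-(distance ** 2) / kernel_width ** 2)

    # Interpretable model: weighted ridge regression with an unpenalized intercept.
    X = np.hstack([np.ones((num_samples, 1)), z])
    WX = X * weights[:, None]
    penalty = ridge * np.eye(n_segments + 1)
    penalty[0, 0] = 0.0
    coef = np.linalg.solve(X.T @ WX + penalty, WX.T @ y)
    return coef[1:]  # drop the intercept


# Toy usage: a fake "classifier" that only looks at the bottom-right quadrant.
image = np.ones((8, 8, 3))
segments = grid_segments(8, 8, cell=4)  # four segments, labeled 0..3


def toy_predict(batch):
    return batch[:, 4:, 4:, :].mean(axis=(1, 2, 3))


importance = lime_image_sketch(image, segments, toy_predict)
top_segment = int(np.argmax(importance))
```

In this toy setup the highest-scoring segment is the bottom-right cell, the only region the fake classifier reads. With a real image classifier, `predict_fn` would wrap the model's probability for the predicted class, and the grid would typically be replaced by a proper superpixel segmentation.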

After finishing the implementation, XDesign asks learners to upload images to observe generated explanations. By experimenting with various images, learners can test their own hypotheses about the limitations of the LIME explainer, such as "generated explanations would not make sense when there are many shapes inside an image such as a keyboard".

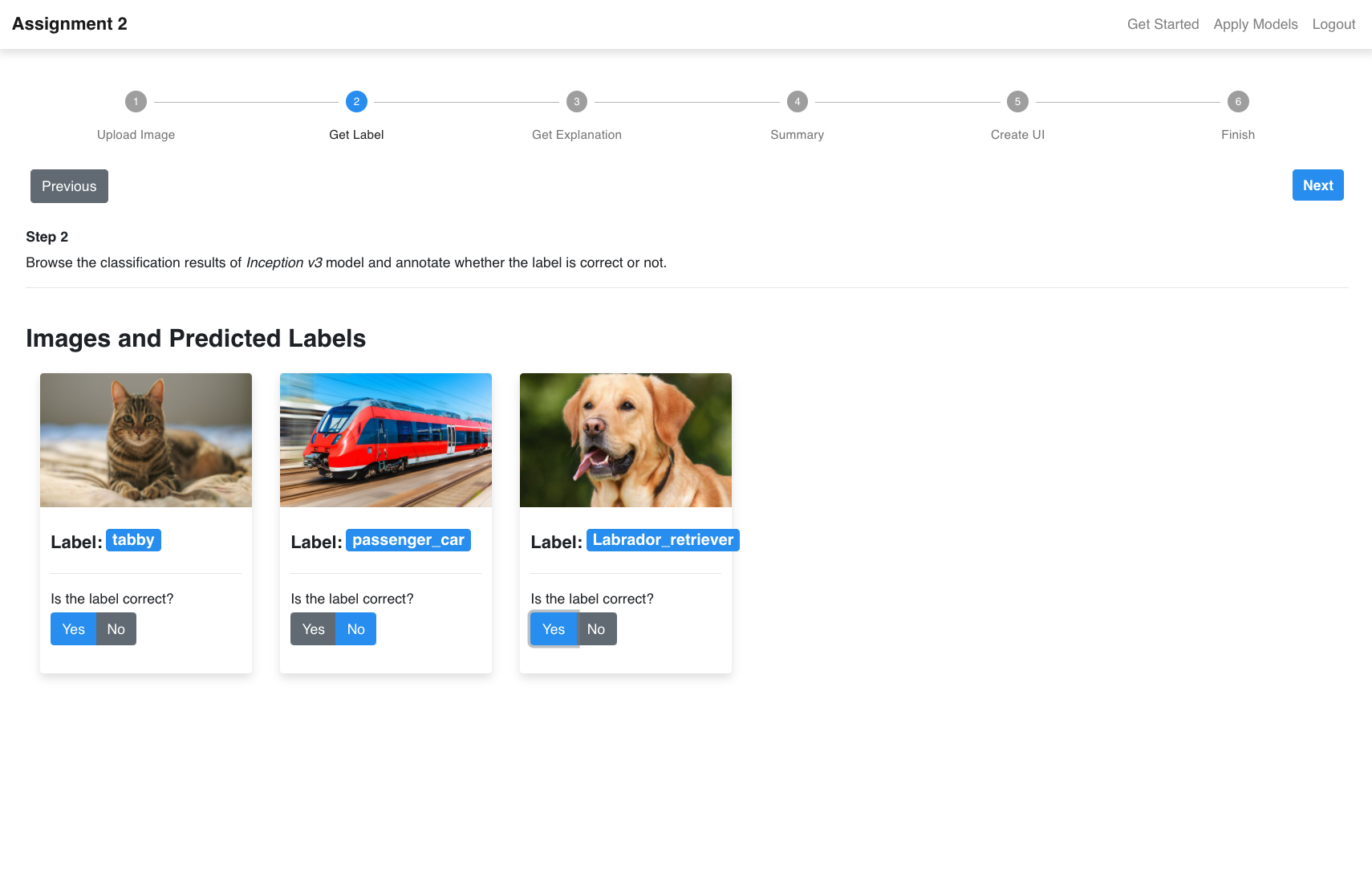

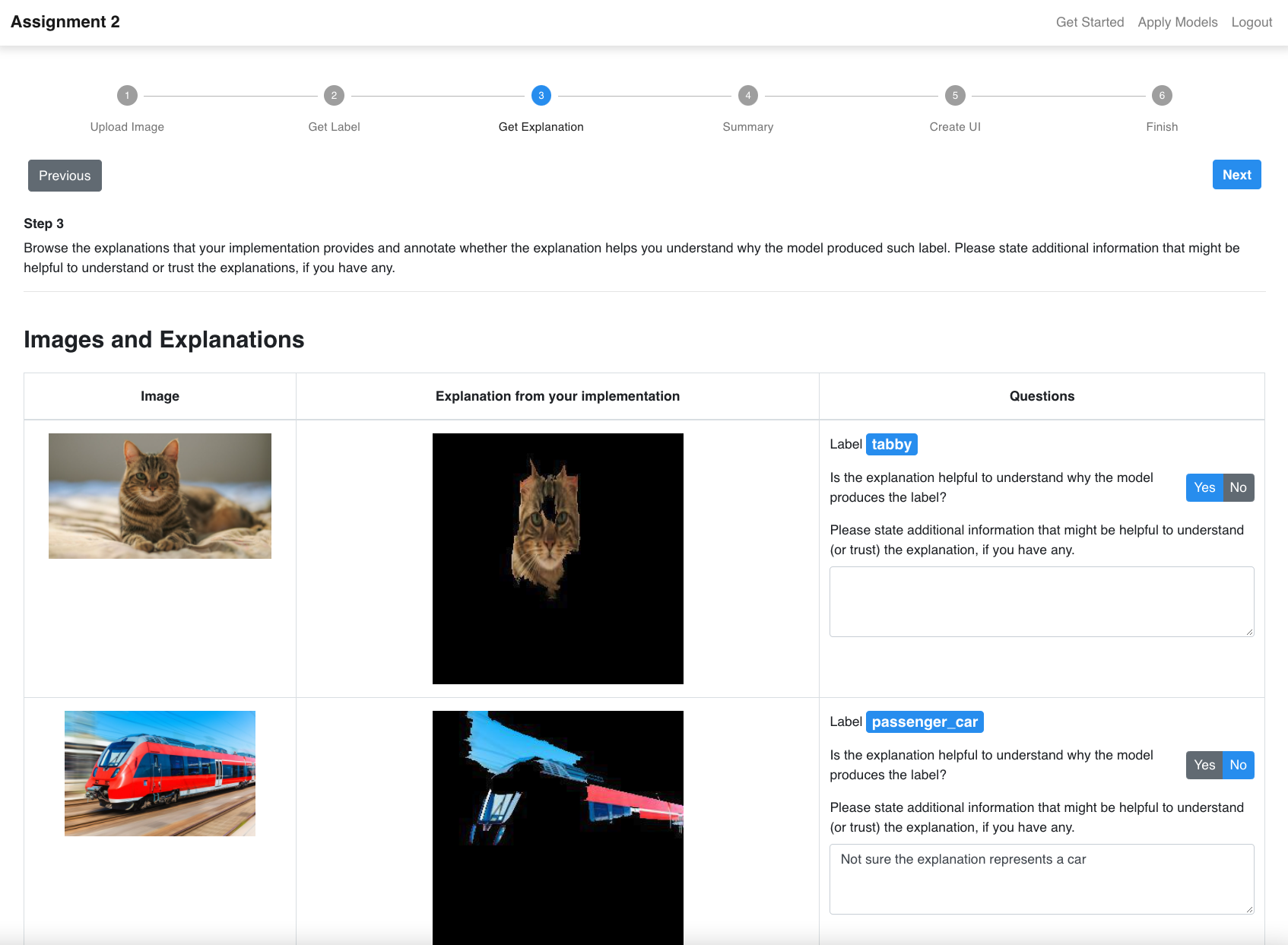

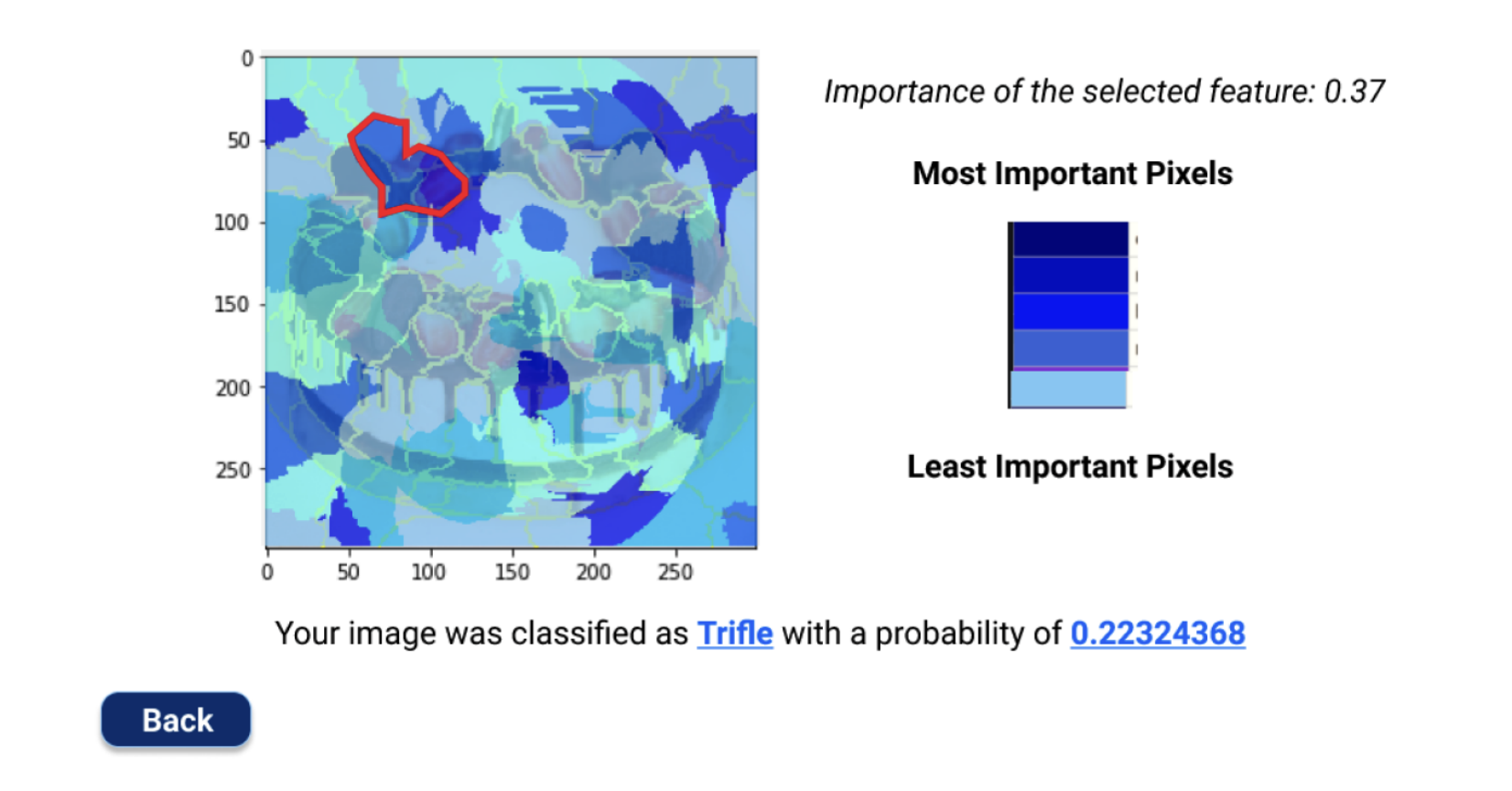

In this step, learners can browse the image classification results (predicted by an Inception-v3 model, the screen on the left) and the corresponding explanations (generated by the learners’ implementation of the LIME explainer, the screen on the right) for their test images. The system then asks learners two yes/no questions about the classification result and explanation of each image: (1) whether the classification result is correct and (2) whether the explanation is helpful for understanding the prediction result.

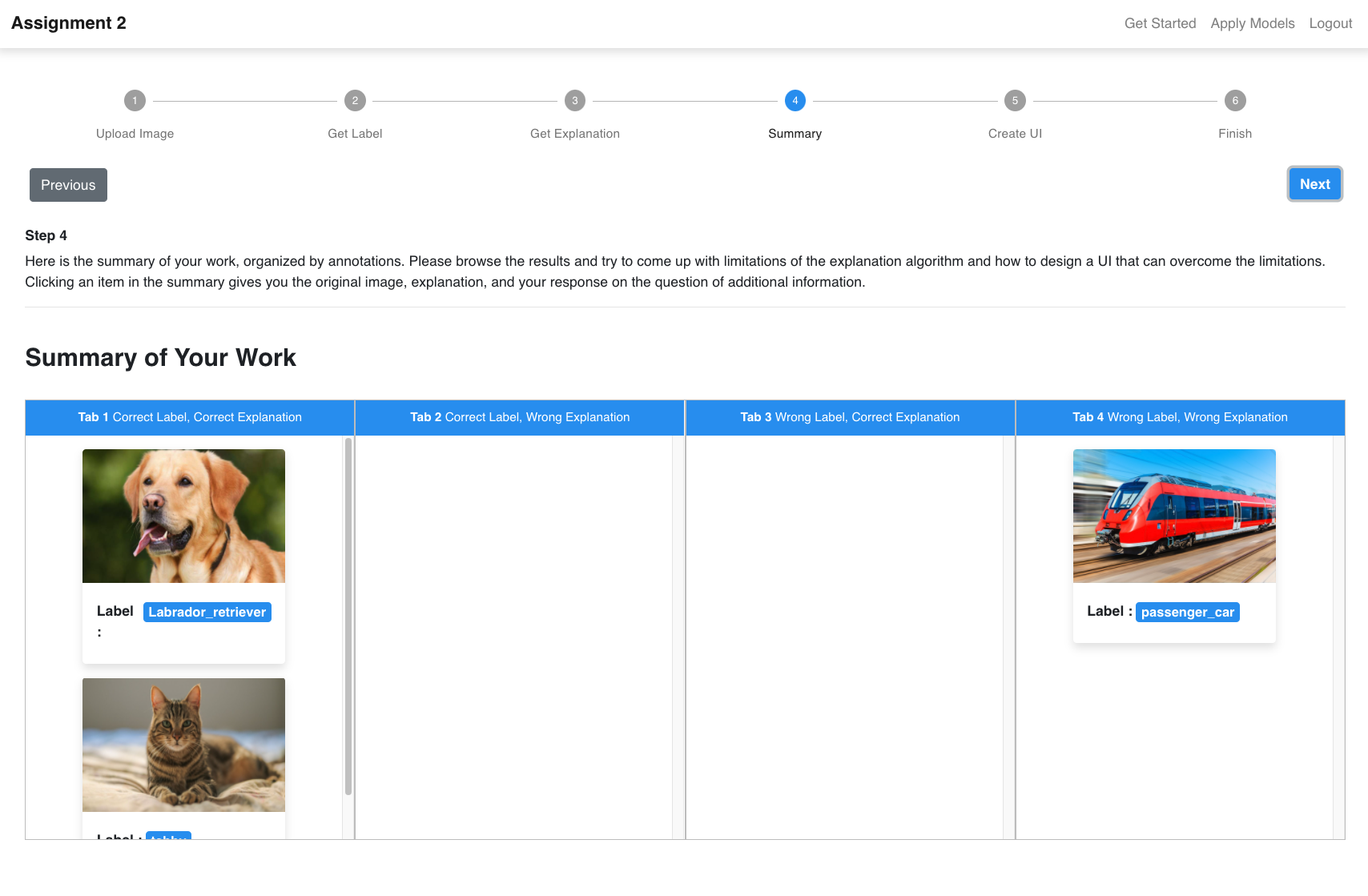

With the uploaded images, computed explanations, and learners’ answers submitted in the previous steps, XDesign presents a summary of results to encourage learners to organize their observations and identify the limitations of the LIME explainer. XDesign shows a table with four columns, one for each combination of whether the classification result is correct or incorrect and whether the explanation is helpful or not. By going through multiple observations in this summary, learners can investigate patterns of when the explanations make sense and when they do not.
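The four-way summary described above can be sketched as a simple grouping of observations by the two yes/no answers. This is only an illustration of the idea, not XDesign's implementation; the `Observation` class, the `summarize` function, and the image file names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    image_name: str               # hypothetical file name of an uploaded image
    classification_correct: bool  # answer to yes/no question (1)
    explanation_helpful: bool     # answer to yes/no question (2)


def summarize(observations):
    """Group image names into the four (correct?, helpful?) columns."""
    columns = {
        (True, True): [],    # correct classification, helpful explanation
        (True, False): [],   # correct classification, unhelpful explanation
        (False, True): [],   # incorrect classification, helpful explanation
        (False, False): [],  # incorrect classification, unhelpful explanation
    }
    for obs in observations:
        key = (obs.classification_correct, obs.explanation_helpful)
        columns[key].append(obs.image_name)
    return columns


# Made-up answers for three uploaded images.
answers = [
    Observation("cat.png", True, True),
    Observation("keyboard.png", True, False),
    Observation("street.png", False, False),
]
summary = summarize(answers)
```

Looking at which images fall into the "unhelpful explanation" columns is one way to spot the kind of pattern the summary step is meant to surface.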

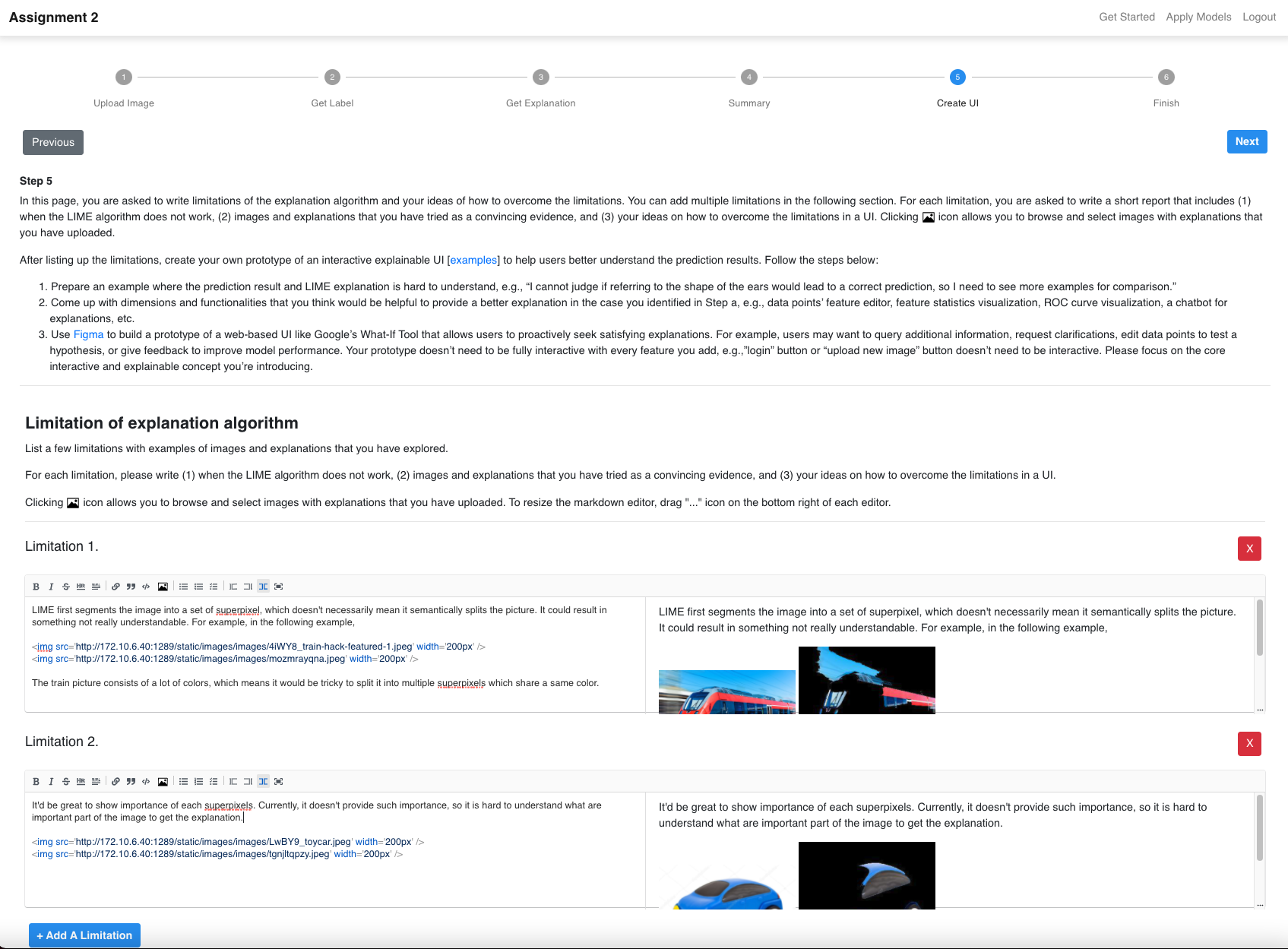

In this stage, learners are asked to write down the limitations of the LIME explainer. Specifically, XDesign asks learners to (1) describe when the LIME explainer does not present comprehensive explanations, (2) attach images and explanations that they observed as convincing evidence, and (3) submit ideas for how to overcome the limitations. XDesign provides a Markdown editor for writing the limitations and encourages learners to attach their observations as evidence to make their arguments more grounded.

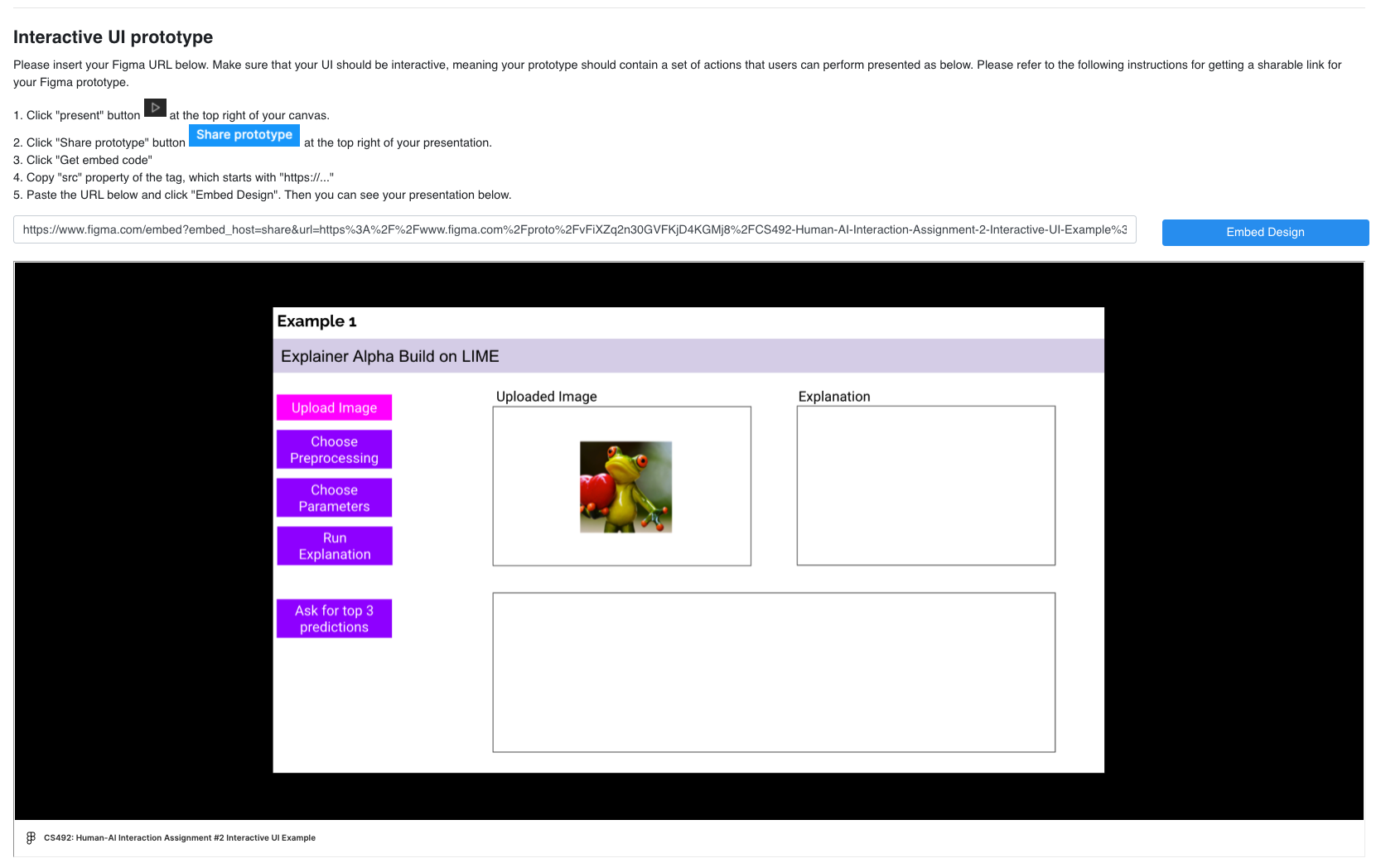

After learners write down the limitations, XDesign asks them to list a few representative questions that could supplement the limitations, which correspond to the questions users might have in the question-driven XAI design process. XDesign then asks learners to create a user interface prototype that answers the questions using Figma, a web-based UI prototyping tool, and to submit the URL of the Figma prototype.

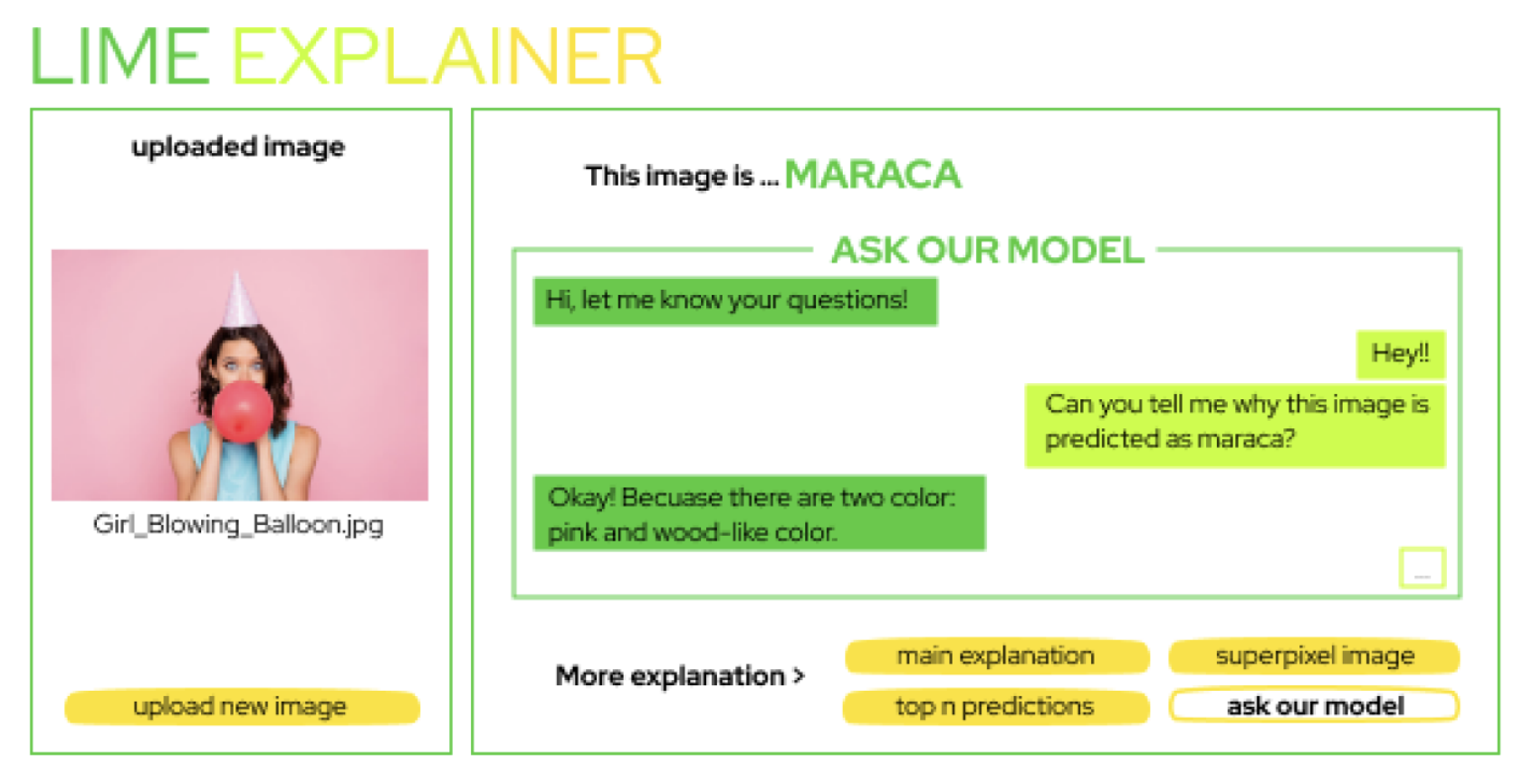

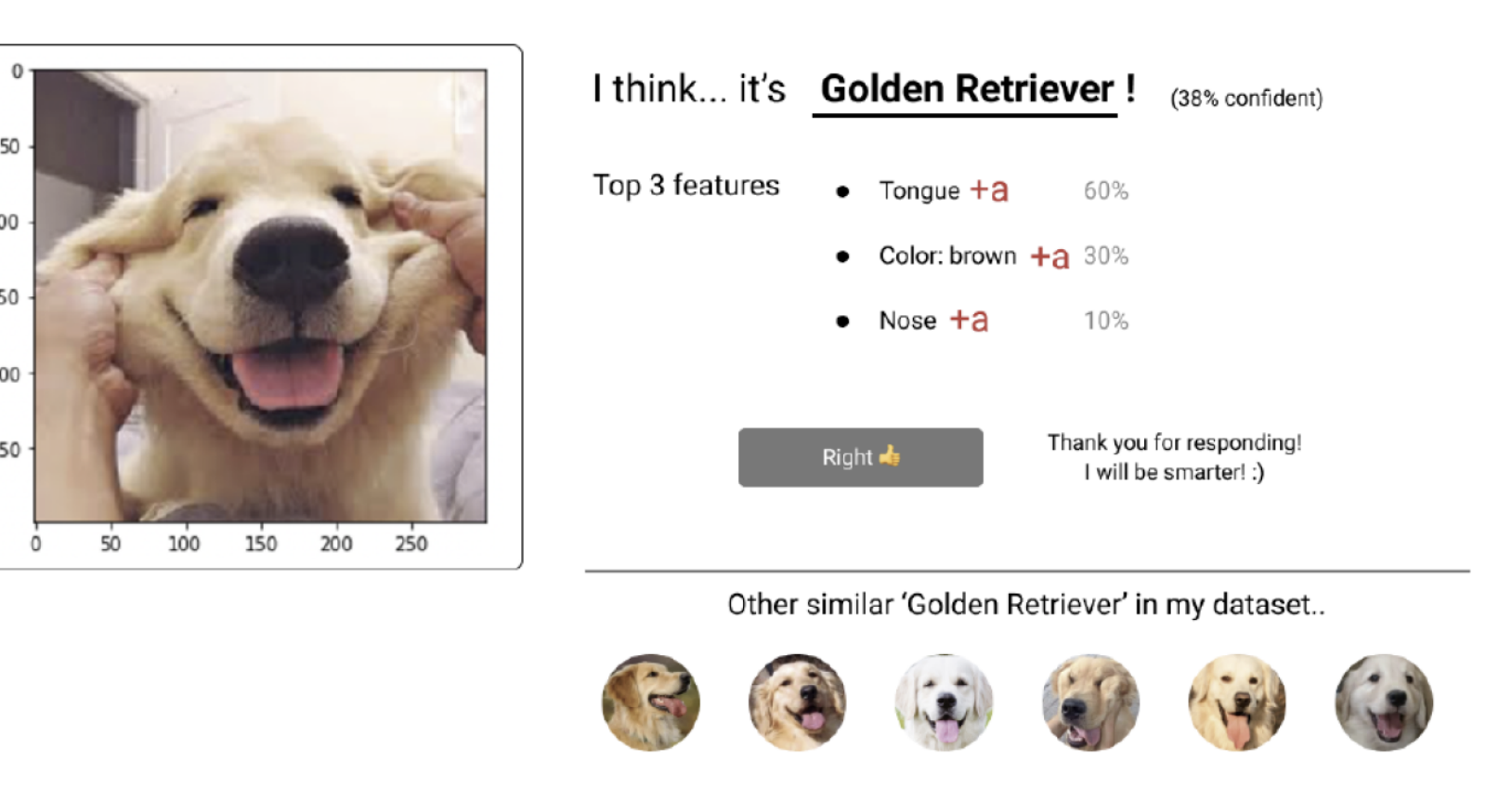

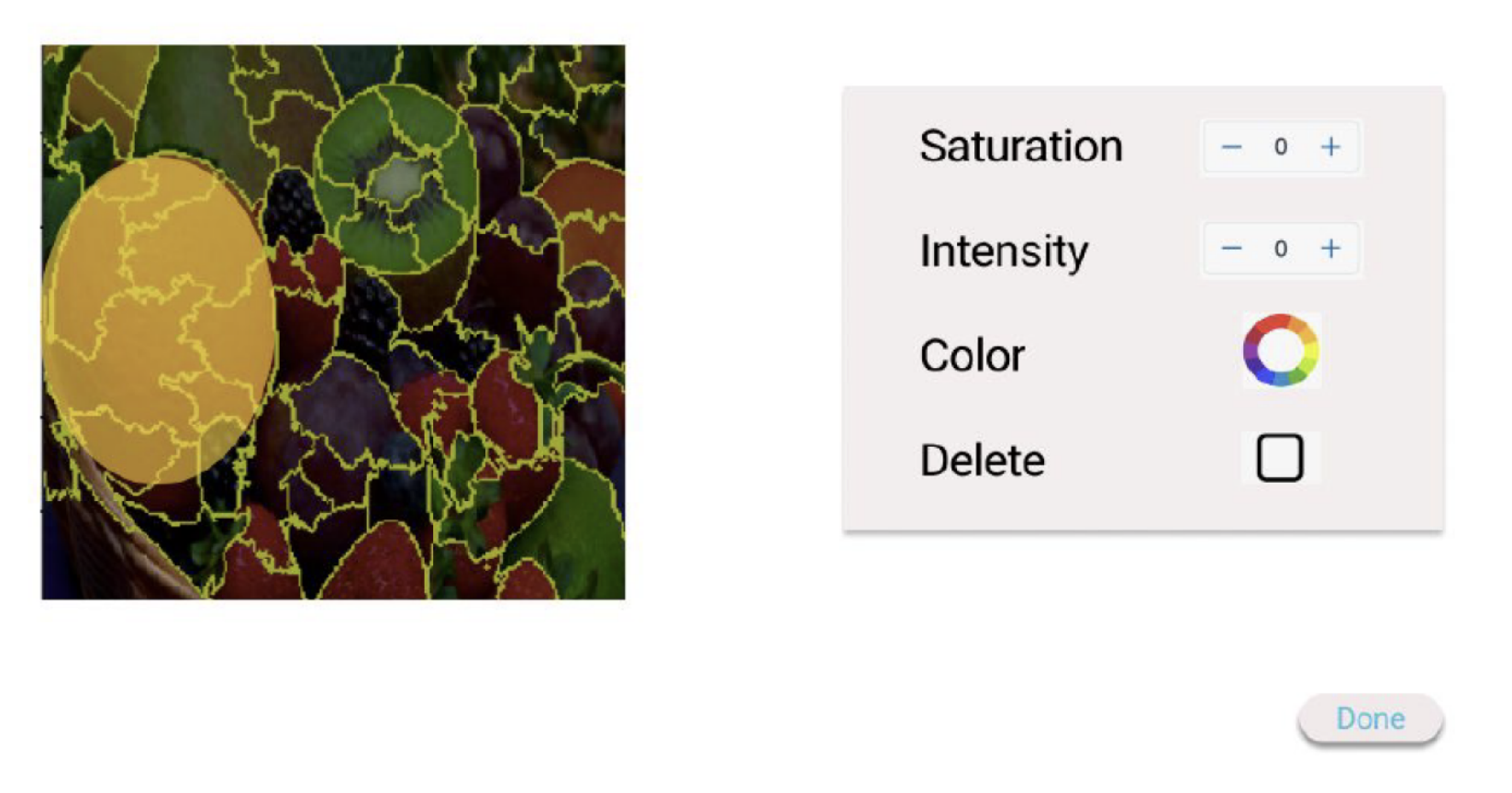

We deployed XDesign in a Human-AI interaction course at KAIST. XDesign was deployed as part of an assignment whose objective was to (1) try off-the-shelf tools for explanations, (2) discuss the strengths and limitations of the tools, and (3) design a user interface prototype that provides user-centered explanations that can address the limitations. The following are examples of students' submissions that aim to provide more usable explanations.

A chatbot that allows users to

An interface that collects user feedback and

An image manipulation interface to

An interface that shows the importance of each pixel as well as accuracy and labeling results

@inproceedings{shin2022xdesign,
  title={XDesign: Integrating Interface Design into Explainable AI Education},
  author={Shin, Hyungyu and Sindi, Nabila and Lee, Yoonjoo and Ka, Jaeryoung and Song, Jean Y and Kim, Juho},
  booktitle={Proceedings of the 53rd ACM Technical Symposium on Computer Science Education V. 2},
  pages={1097--1097},
  year={2022}
}